My Web Sync Engine is done 🚀

Or at least, I reached the point of diminishing returns. Therefore, it's release time!

Here is what went on behind the curtains, and what I learned 👇

Context: My sync engine is made for local-first (offline-first) and for single-users (not optimized for real-time collaboration) 👀

Your problem is data

A sync engine comes down to handling data flowing in multiple formats. And keeping all the data in sync.

At the beginning I started implementing my custom Event Sourcing conflict resolution system.

And then I stopped 🫠

The most critical and valuable piece of a sync engine is, well, data syncing 💁🏼♂️

Taking a bunch of data from multiple clients, and converging it all to a consistent (and reasonable) final state.

Everything else is moving and storing data around.

CRDT saved me

That's when I jumped into a pre-made solution: CRDTs with Loro.

By using a library I bypassed the problem of syncing data. The CRDT handles that for me 🫡

// Read current workspace (stored in database)

const workspace = yield* AuthWorkspace;

// Extract LoroDoc from sync API request

const doc: LoroDoc = yield* Schema.decode(SnapshotToLoroDoc)(snapshot);

doc.import(workspace.snapshot); // 👈 Syncing magic you don't need to implement

// Store new consistent snapshot in database

const newSnapshot = yield* Schema.encode(SnapshotToLoroDoc)(doc);All as easy as a single import call, loro-crdt takes care of the rest.

Note: Data is stored as bytes in the database (result of encoding

LoroDoc).

All offline by default

My solution is local-first at its core. The source of true is local data, the server only syncs+backups.

That's not true for all sync engines 🙌

This has some implications:

- A client can store/sync any previous schema version

- Schema and data migrations are performed on the client

The UI only reads from local storage, there are no requests to fetch data inside components.

Syncing (aka data fetching) is all performed on the background inside a Web Worker ⚡️

Auth lives on the server

My sync engine allows sharing a link to another device to it give access to my local data (called "workspace").

Authorization uses JWT tokens that are stored and verified on the server 🤝

It works like this:

- A client creates its own local workspace (it can make any change, even offline)

- The client tells the server to store the workspace and mark itself as "master"

- The server generates a "master token" for the master client

- The master client generates access tokens for other clients (with access scope and expiration date)

- The master client shares a link with another client to access its workspace

- The other client ask the server for access permission using the access token

In this model, the master client has full control on each shared token, but it's the server that handles authorization.

The master client can revoke tokens at any time 🔗

Migrations are, well, interesting

In my model data is stored as bytes, and then decoded with loro-crdt into LoroDoc.

Migrations therefore rely on mapping a previous LoroDoc to a new LoroDoc.

I achieve this by literally using a mapping function for each version upgrade:

// List of versions

export const Version = [1] as const;

export type Version = (typeof Version)[number];

// Migrations for each version upgrade (type-safe)

const migrations = {

1: (_) => SnapshotSchema.EmptyDoc(),

} satisfies Record<Version, (doc: LoroDoc) => LoroDoc>;Every time the schema changes (SnapshotSchema), I add a new Version and a migration function from LoroDoc to LoroDoc.

SnapshotSchemainternally stores its own current version. The client performs a full migration to the latest version every time the app loads ⚡️

No queries on the server

The server is not designed for queries (by design 💁🏼♂️).

The server only stores bytes. Therefore, it works for any app. It's completely client-agnostic (shared between multiple apps with different schemas)

As mentioned, the server syncs and backups, nothing more. Queries and joins are defined on the client.

Conclusion: A sync engine is not a "library"

That's my main take away from this (long) process:

A sync engine is so fundamental, that you cannot expect to just "install a sync engine library" 🙌

The shape of your data, how it's stored, how it's fetched. A sync engine needs to control so much of your architecture that it's not possible to give a "simple" library to do all of it.

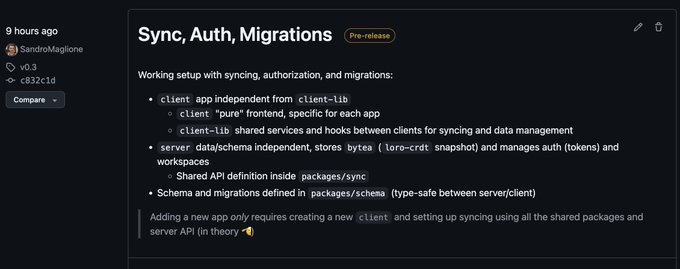

My final project is a monorepo with 2 apps (client/server) and 3 packages.

Whatever sync engine you choose, remember you are along for the ride: it will impact all your stack and architecture

Building blocks for Web Sync Engine ⚡️ Sync/Auth Server API 🧱 Schema and migrations 🔗 client/server implementations Close to public release, everything working as expected 🔜

1 week to the Effect Days.

The next 2 weeks I will bring you the latest and greatest from the conference.

As well as my experience as a speaker: behind the curtains of how it all started, how I prepared, and how I performed 👀

See you next 👋